Paper - The Theory and Practice of Induction by Alignment

Abstract

Induction is the discovery of models given samples.

This paper demonstrates formally from first principles that there exists an optimally likely model for any sample, given certain general assumptions. Also, there exists a type of encoding, parameterised by the model, that compresses the sample. Further, if the model has certain entropy properties then it is insensitive to small changes. In this case, approximations to the model remain well-fitted to the sample. That is, accurate classification is practicable for some samples.

Then the paper derives directly from theory a practicable unsupervised machine learning algorithm that optimises the likelihood of the model by maximising the alignment of the model variables. Alignment is a statistic which measures the law-likeness or the degree of dependency between variables. It is similar to mutual entropy but is a better measure for small samples. If the sample variables are not independent then the resultant models are well-fitted. Furthermore, the models are structures that can be analysed because they consist of trees of context-contingent sub-models that are built layer by layer upwards from the substrate variables. In the top layers the variables tend to be diagonalised or equational. In this way, the model variables are meaningful in the problem domain.

If there exist causal alignments between the induced model variables and a label variable, then a semi-supervised sub-model can be obtained by minimising the conditional entropy. Similar to a Bayesian network, this sub-model can then make predictions of the label.

The paper shows that this semi-supervised method is related to the supervised method of optimising artificial neural networks by least-squares gradient-descent. That is, some gradient-descent parameterisations satisfy the entropy properties required to obtain likely and well-fitted neural nets.

Overview

The ‘Overview’ section covers the important points of the theory and some interesting parts of the practice. The overview also has a summary of the set-theoretic notation used throughout.

Overview pdf (92 pages) (versions)

Paper

Although this document is still in the format of a paper, it has grown to be the length of a book. In order to be more accessible there is an ‘Overview’ section at the beginning. The complete theory and various practical implementations are in the following sections. The section ‘Induction’ also begins with a review of relevant parts of the earlier sections. The paper finishes with some appendices on various related issues, including an appendix ‘Useful functions’.

Paper pdf (1185 pages) (versions)

Code - implementation of Aligned Induction

The Alignment repository is an implementation, written in the Haskell programming language, of some of the set-theoretic functions and structures described in the paper, including the model framework and practicable inducers. It is designed with the goal of theoretical correctness rather performance.

The AlignmentPy repository has similar functionality, but implemented in the Python programming language.

The AlignmentC repository has a subset of the functionality, implemented in modern C++. It is designed for permformance.

Documentation - commentary on the Overview

Some of the sections of the Overview have been illustrated with a Haskell commentary using the Alignment repository. The comments provide both (a) code examples for the paper and (b) documentation for the code. The examples are designed to be comprehensible to non-programmers.

Similarly, the Python commentary uses the AlignmentPy repository.

Documentation - commentary on the implementation of Tractable and Practicable Inducers

For programmers who are interested in implementing inducers, some of the sections of the paper have been expanded in a Code commentary with links to documentation of the code in the Alignment repository and AlignmentPy repository. The code documentation is gathered together in Haskell code and Python code.

Code - fast implementation of Aligned Induction

The AlignmentRepa repository is a fast Haskell and C implementation of some of the practicable inducers described in the paper. The AlignmentRepa repository depends on the Alignment repository for the underlying model framework. The slower implementations of some of the practicable inducers in the Alignment repository can be used to verify the correctness of equivalent faster implementations in AlignmentRepa.

The AlignmentRepaPy repository is a fast Python and C implementation.

The AlignmentRepaC repository is a fast modern C++ implementation. It is designed to manipulate large models while minimising memory and maximising performance.

Documentation - commentary on the implementation of fast Practicable Inducers

The Haskell implementation of fast Practicable Inducers discusses the implementation of the inducers using the AlignmentRepa repository. Also, the Python implementation of fast Practicable Inducers discusses the implementation of the inducers using the AlignmentRepaPy repository.

Dataset Analysis - UCI Machine Learning Repository Mushroom Data Set

The UCI Machine Learning Repository Mushroom Data Set is a popular dataset often used to test machine learning algorithms.

We analyse this dataset with Haskell using the MUSH repository which depends on the AlignmentRepa repository. We consider (a) predicting edibility without modelling, (b) predicting odor without modelling, (c) manual modelling of edibility and (d) induced modelling of edibility. The practicable model induction is described here.

We also analyse this dataset with Python using the MUSHPy repository which depends on the AlignmentRepaPy repository. We consider (a) predicting edibility without modelling, (b) predicting odor without modelling, (c) manual modelling of edibility and (d) induced modelling of edibility. The practicable model induction is described here.

Dataset Analysis - Ames House Prices

The Ames Housing dataset describes the sale of individual residential property in Ames, Iowa from 2006 to 2010. It was compiled by Dean De Cock for use in data science education.

We analyse this dataset with Haskell using the AMES repository which depends on the AlignmentRepa repository. We consider (a) Predicting sale price without modelling and (b) Induced modelling of sale price. The practicable model induction is described here.

We also analyse this dataset with Python using the AMESPy repository which depends on the AlignmentRepaPy repository. We consider (a) Predicting sale price without modelling and (b) Induced modelling of sale price. The practicable model induction is described here.

Dataset Analysis - MNIST handwritten digits

The MNIST dataset contains binary images of handwritten digits.

We analyse this dataset with Haskell using the NIST repository which depends on the AlignmentRepa repository. There is a summary here.

We also analyse this dataset with Python using the NISTPy repository which depends on the AlignmentRepaPy repository. There is a summary here.

The NISTC repository is the high performance implementation which depends on the AlignmentRepaC repository.

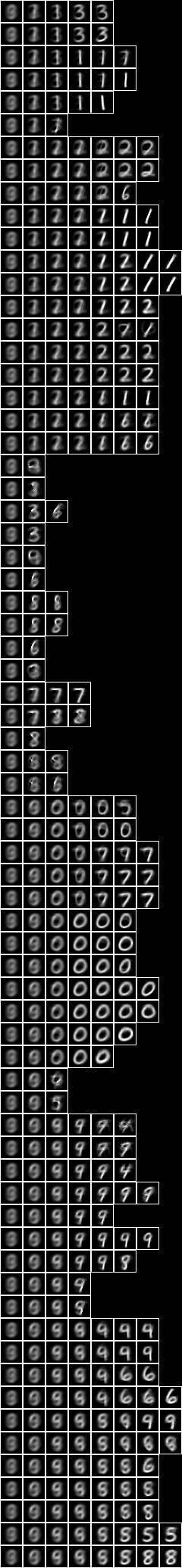

This is an example of a classification of the dataset by one of the models -

Dataset Analysis - MOFC M5 Forecasting

The M Open Forecasting Center (MOFC) M5 Competition aims to forecast the unit sales of Walmart retail goods.

The M5FA repository is the high performance implementation which depends on the AlignmentRepaC repository.

Dataset Analysis - TBOT01 - TurtleBot3 controller

TurtleBot3 is a Robot Operating System standard platform robot. Here we develop a TurtleBot3 controlled by unsupervised induced models, as well as by partially or wholly supervised conditioned models. In the TBOT01 repository we demonstrate that it is possible for the TurtleBot3 to learn about its environment without any predefined label or goal, and then to apply this knowledge to accomplish a task. See the discussion here.

This is an example of a classification of lidar scans by one of the models -

Code - Active Aligned Induction

The AlignmentActive repository brings together a history and a model to define a thread-safe structure for real-time aligned induction. The AlignmentActive repository depends on the AlignmentRepaC repository .

Dataset Analysis - TBOT02 - TurtleBot3 dynamic controller

In TBOT02 we extend the turtlebot developments in TBOT01 to implement dynamic modelling. That is, instead of (1) acquiring history, (2) modelling, and (3) applying model in three different stages, all are done concurrently using the AlignmentActive repository.

Dataset Analysis - TBOT03 - TurtleBot3 slice topology controller

In TBOT03 we extend the turtlebot developments in TBOT01 and TBOT02 to examine slice topologies and motor actions.

Dataset Analysis - WBOT02 - Qt Wotbot

In TBOT01, TBOT02 and TBOT03 we investigated real-time modelling of a Turtlebot, a small wheeled robot with a lidar. We experimented with it by running various controllers in the turtlebot3 house simulated in the Gazebo virtual environment. In WBOT02 we take a step in a different direction - Wotbot will focus on real-time modelling of vision, hearing and speech implemented in a smartphone. That is, Wotbot will be a robot with a head, rather than a body, at least in its initial versions. In this project we have developed models mainly using Film Noir videos.

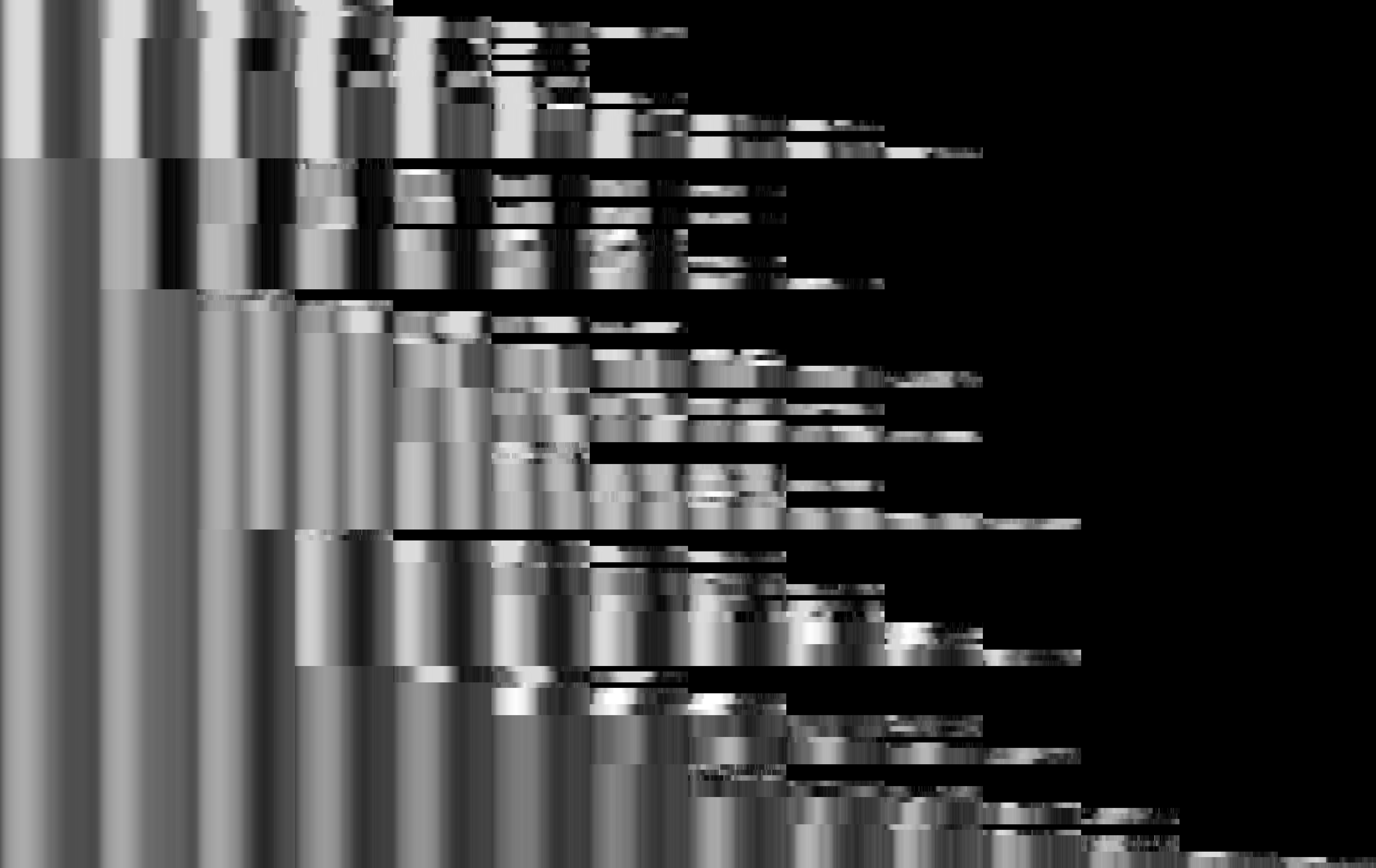

This image has been augmented with one of the wotbot models. Here we have highlighted the locations with the longest decomposition paths, i.e. the most interesting locations. The colour indicates how closely related the locations are in the model -